Design and Evaluation of Spatio-Temporal Deep Learning Models for Urban Road Flood Detection

Copyright ⓒ 2025 The Digital Contents Society

This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (http://creativecommons.org/licenses/by-nc/3.0/) which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited.

Abstract

This paper proposes a spatio-temporal deep learning model for urban road flood detection using CCTV footage. Two architectures are developed: one based on the ViViT framework and the other employing a CNN–Transformer hybrid design. The models are trained and evaluated on both the Kinetics-400 dataset and real-world CCTV footage. Experimental results indicate that the proposed models outperform the baseline by 8–12% in accuracy. In particular, the ViViT-based model achieves 97% inference accuracy on a combined daytime and nighttime validation set. To improve generalization, data preprocessing and augmentation techniques are applied to reduce visual discrepancies between training and validation data. Furthermore, interpretability analysis is conducted to visualize the models’ decision-making processes, thereby enhancing transparency and reliability. These findings demonstrate the effectiveness and feasibility of spatio-temporal learning for real-time urban flood detection, providing a robust foundation for integration into disaster response systems.

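Abstract (translated from the Korean)

This paper proposes spatio-temporal deep learning models for detecting urban road flooding from CCTV footage. Two models, one with a ViViT structure and one with a CNN–Transformer hybrid structure, were designed and implemented, and their performance was evaluated using CCTV data and the Kinetics-400 dataset. In the experiments, the proposed models achieved 8–12% higher accuracy than the existing reference model, and the ViViT-based model reached 97% inference accuracy on the combined daytime and nighttime dataset. In addition, preprocessing and data augmentation were applied to reduce visual differences between the training and validation data, and visualization of the decision basis was used to secure the interpretability and reliability of the models.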

Keywords:

Urban Road Flood Detection, Deep Learning, Spatio-Temporal Analysis, ViViT Model, CNN-Transformer Hybrid

Ⅰ. Introduction

In recent years, heavy rainfall events have become more frequent worldwide due to abnormal climate phenomena, causing recurring flood damage and road flooding in urban areas. To respond effectively to such disasters, deep learning-based flood prediction and detection methods using CCTV footage have been actively studied. Early research mainly employed video analysis techniques based on 2D or 3D CNNs[1]–[3], whereas more recent approaches have focused on Transformer-based models, which are better at capturing complex spatio-temporal dependencies. Models such as ViViT[4] and other Transformer architectures[5],[6] have shown strong performance in recognizing intricate spatio-temporal patterns, making them suitable for real-time environmental perception tasks such as flood detection. Hybrid architectures combining CNNs and Transformers, along with comparative analyses of their performance, have also been actively explored[7]–[10]. Reflecting these advances, this paper proposes spatio-temporal urban road flood detection models and benchmarks them on the Kinetics-400 dataset[11]. Furthermore, we conduct interpretability analysis, following [12],[13], to assess the generalization performance of the proposed models.

The structure of this paper is as follows. Section 2 reviews key prior studies on deep learning-based road flood detection and examines recent technological trends. Section 3 describes the proposed spatio-temporal flood detection models in detail, including dataset collection and construction, and presents the two detection algorithms, namely the ViViT-based and CNN–Transformer hybrid models. This section also includes a comparative performance evaluation of the models, an analysis of their generalization capability, and a discussion of future research directions. Finally, Section 4 presents the conclusions of this study.

Ⅱ. Related Previous Studies

The research trends in spatio-temporal analysis algorithms have been changing since 2020, with a notable shift from CNN-based approaches to Transformer-based models in recent years.

Early spatio-temporal analysis models extended conventional 2D CNNs with a temporal dimension, resulting in 3D CNN architectures capable of capturing both spatial and temporal features[2],[3]. However, 3D CNNs incur significant computational costs. Because a 3D convolution slides its kernel along three axes, including the temporal one, a kernel of size k requires on the order of k³ multiply-accumulate operations per output value, compared with k² for a 2D kernel, so the cost grows cubically with kernel size.
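To make the cost comparison concrete, the following sketch counts multiply-accumulate operations (MACs) for a single convolutional layer with and without a temporal kernel axis. The layer sizes are illustrative assumptions and are not taken from this paper.

```python
def conv_macs(out_positions: int, kernel_elems: int, c_in: int, c_out: int) -> int:
    """MACs for one conv layer: each output value sums kernel_elems * c_in
    products, and there are out_positions * c_out output values."""
    return out_positions * c_out * kernel_elems * c_in

# Assumed sizes: 16 frames of 56x56 feature maps, 64 -> 64 channels, kernel size 3.
frames, height, width, c_in, c_out, k = 16, 56, 56, 64, 64, 3
positions = frames * height * width  # stride 1 with 'same' padding

macs_2d = conv_macs(positions, k * k, c_in, c_out)      # 2D kernel applied per frame
macs_3d = conv_macs(positions, k * k * k, c_in, c_out)  # 3D kernel also spans k frames

print(f"2D conv: {macs_2d:,} MACs")
print(f"3D conv: {macs_3d:,} MACs ({macs_3d / macs_2d:.0f}x)")
```

For the same output size, the 3D layer costs k times as much as the 2D layer, which is where the k³ versus k² scaling comes from.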

In recent years, Transformer-based models have been widely used in spatio-temporal analysis, a representative example being the Video Vision Transformer (ViViT)[4],[5]. Proposed by Google in 2021, ViViT extends the Vision Transformer, which introduced the self-attention mechanism to image analysis, to video. Early studies confirmed that self-attention can effectively model temporal relationships, and ViViT was developed to broaden its application from single images to sequences of frames such as video[5],[6].

Recent studies have explored hybrid video analysis models that integrate CNNs and Transformers to complement each other's strengths[7],[9]. Transformers excel at modeling temporal dependencies through self-attention, yet they lack the spatial inductive biases needed for effective spatial representation[8]. Self-attention provides a global receptive field by computing all pairwise dependencies between input tokens; however, this can make spatial analysis inefficient because irrelevant regions are also involved. Conversely, CNNs provide strong spatial inductive biases via localized convolutional filters, making them well suited to spatial feature extraction. Their ability to capture temporal dependencies is limited, however, because a temporal convolution only covers the narrow range determined by its kernel size, resulting in a restricted receptive field[6],[8]. To address these limitations, CNN–Transformer hybrid models have been proposed. These architectures typically use CNNs for spatial feature extraction and apply Transformers to model temporal relationships among the extracted spatial features, thereby enabling more effective spatio-temporal representation learning.

Ⅲ. Proposed Spatio-Temporal Deep Learning Models for Road Flood Detection

This section presents the design of road flood detection algorithms based on the spatio-temporal analysis architectures discussed in Section 2, namely the ViViT and CNN–Transformer hybrid models. The primary objective is to enhance classification accuracy for road CCTV footage under both daytime and nighttime conditions.

3-1 Training Dataset Acquisition

To construct a training dataset for urban road flood detection, raw CCTV video data related to road flooding were collected from two sources: open datasets provided by the Urban Traffic Information Center (UTIC) and publicly available videos on YouTube. The total duration of the collected raw CCTV footage amounts to approximately 1,296 hours, as summarized in Table 1. The videos were segmented into 20-second clips and sampled at 10 frames per second for frame-level analysis.

The video data collected from YouTube were selected based on four criteria to ensure visual similarity with the UTIC footage: (1) direct capture of road surfaces, (2) fixed aerial CCTV viewpoint at a certain height or higher, (3) stationary camera without movement, and (4) inclusion of both normal and flooded road conditions.

To reduce the dataset bias, videos were selected across diverse regions, seasons, weather conditions (clear, rainy, cloudy), and varying traffic densities. Overlapping locations between YouTube and UTIC datasets were excluded to prevent location-specific bias. This sampling strategy also mitigated the risk of overfitting by ensuring that the validation set comprised entirely unseen locations and conditions.

The clip length (20 seconds) and frame rate (10 FPS) were determined based on preliminary experiments and prior work[4],[5]. These settings provided a sufficient temporal context to capture flood-related motion cues while maintaining computational efficiency. Comparative experiments with 5 FPS showed an average 3% drop in detection accuracy, confirming the benefit of the chosen frame rate.
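The clip segmentation and frame sampling described in this section can be sketched as follows. Only the 20-second clip length and the 10 FPS sampling rate come from the text; the 30 FPS source frame rate, the dropping of a trailing partial clip, and the helper name `clip_frame_indices` are assumptions for illustration.

```python
def clip_frame_indices(total_frames: int, source_fps: int,
                       clip_seconds: int = 20, target_fps: int = 10) -> list[list[int]]:
    """Split a video into fixed-length clips and return, for each clip, the
    source-frame indices that approximate sampling at target_fps.
    A trailing partial clip is dropped (assumed policy)."""
    frames_per_clip = clip_seconds * source_fps
    samples_per_clip = clip_seconds * target_fps
    clips = []
    for start in range(0, total_frames - frames_per_clip + 1, frames_per_clip):
        clips.append([start + (i * source_fps) // target_fps
                      for i in range(samples_per_clip)])
    return clips

# Assumed example: a 65-second recording at 30 FPS.
clips = clip_frame_indices(total_frames=65 * 30, source_fps=30)
print(len(clips), len(clips[0]), clips[0][:4])
```

With these assumed inputs, each 20-second clip yields 200 sampled frames, taken from every third source frame.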

3-2 Training Dataset Construction

A training dataset for the proposed spatio-temporal road flood detection model was constructed as summarized in Table 2. The training data were collected from videos sourced from YouTube. To prevent model bias caused by data imbalance, two criteria were applied in constructing the training dataset. First, for videos of the same location, the amounts of normal and flooded road footage were kept equal. This prevents the model from becoming biased toward the class with more samples at that location. Second, the amount of footage across different locations was kept roughly balanced to avoid bias toward any particular site.
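The first balancing criterion can be illustrated with a short sketch that keeps equal numbers of normal and flooded clips at every location. The record layout (`location`, `label`) and the random downsampling rule are assumptions, since the text does not state how the balance was achieved; the second criterion (balance across locations) is not covered here.

```python
import random
from collections import defaultdict

def balance_per_location(clips, seed=0):
    """Keep equal numbers of 'normal' and 'flooded' clips at every location
    by randomly downsampling the larger class (assumed strategy)."""
    rng = random.Random(seed)
    by_key = defaultdict(list)
    for clip in clips:
        by_key[(clip["location"], clip["label"])].append(clip)

    balanced = []
    for location in sorted({loc for loc, _ in by_key}):
        normal = by_key.get((location, "normal"), [])
        flooded = by_key.get((location, "flooded"), [])
        keep = min(len(normal), len(flooded))
        balanced += rng.sample(normal, keep) + rng.sample(flooded, keep)
    return balanced

# Hypothetical clip records for two locations.
clips = (
    [{"location": "A", "label": "normal"}] * 5
    + [{"location": "A", "label": "flooded"}] * 2
    + [{"location": "B", "label": "normal"}] * 3
    + [{"location": "B", "label": "flooded"}] * 4
)
balanced = balance_per_location(clips)
print(len(balanced))
```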

The validation dataset was built from videos collected by the Urban Traffic Information Center (UTIC). To evaluate the model’s generalization performance, only footage from locations not included in the training set was used. From the collected UTIC videos, both normal and flooded road footage were extracted exclusively from CCTV channels where flooding actually occurred.

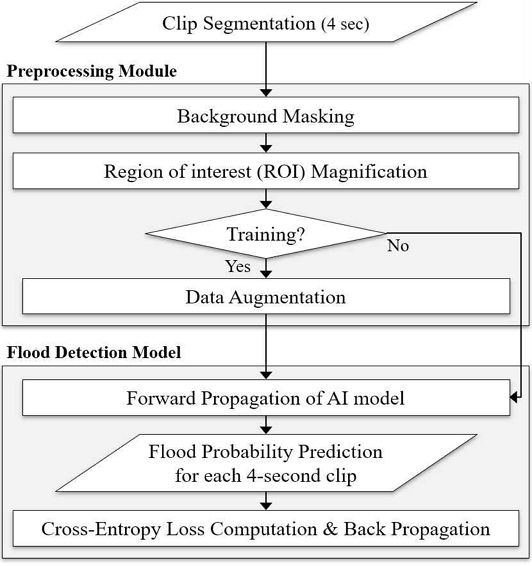

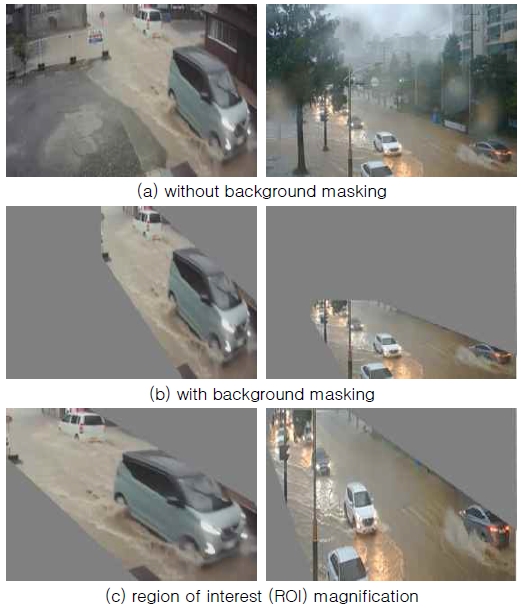

To improve the training and inference performance of the flood detection algorithm, this paper proposes a data preprocessing module, as depicted in Figure 4. The proposed module converts video data into a format suitable for effective processing by AI models. For the model to achieve high inference accuracy on validation data after training on the training data, the visual characteristics between the two datasets must be consistent. Accordingly, a masking module was introduced to remove background information outside the road region within each video frame.

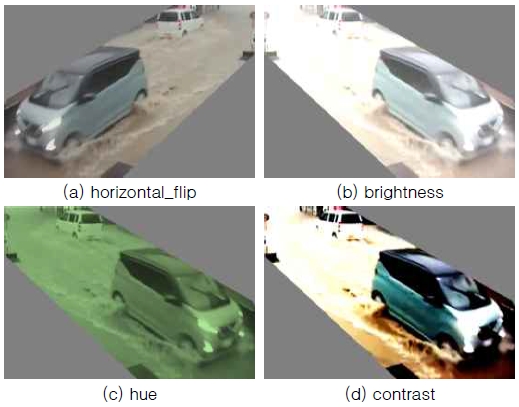

Figure 5(c) presents an enlarged view of the visible video region in the background-masked footage, enabling the model to focus on the region of interest. The gray masked background contains no meaningful information and may adversely affect the model’s training and inference, making it essential to minimize these areas. Additionally, to improve the model’s generalization performance during training, data augmentation was applied to the training dataset as shown in Figure 6.
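A minimal sketch of the masking and enlargement steps is given below, assuming a per-camera binary road mask is already available (how the mask is obtained is not described here). NumPy is used for illustration; the gray fill value of 128 and the function name `mask_and_crop` are assumptions, and the subsequent resize to the model input size is omitted.

```python
import numpy as np

def mask_and_crop(frame: np.ndarray, road_mask: np.ndarray, fill: int = 128) -> np.ndarray:
    """Replace non-road pixels with gray, then crop to the bounding box of the
    road region so that less of the frame is occupied by masked background."""
    masked = np.where(road_mask[..., None], frame, fill).astype(frame.dtype)
    rows = np.flatnonzero(road_mask.any(axis=1))
    cols = np.flatnonzero(road_mask.any(axis=0))
    return masked[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]

# Toy 6x8 RGB frame with a roughly rectangular road region (assumed shapes).
frame = np.full((6, 8, 3), 200, dtype=np.uint8)
road_mask = np.zeros((6, 8), dtype=bool)
road_mask[2:5, 1:7] = True
road_mask[2, 1] = False  # a background pixel that falls inside the bounding box

cropped = mask_and_crop(frame, road_mask)
print(cropped.shape)
```

Cropping to the bounding box before resizing is one simple way to reduce the share of gray pixels in the final input; background pixels inside the box remain gray.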

3-3 Spatio-Temporal Algorithm for Road Flood Detection

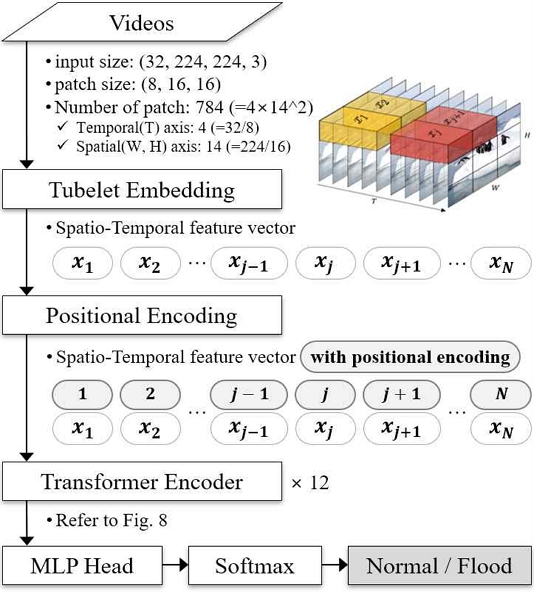

The proposed spatio-temporal flood detection model based on the ViViT architecture consists of three primary modules, namely the tubelet embedder, the positional encoder, and the transformer encoder, as shown in Figure 7. Each module is designed to effectively extract and learn spatio-temporal features from video data. The tubelet embedder module employs a 3D convolutional layer to divide the input video into spatio-temporal patches and generate an embedding vector for each patch. In this paper, the patch size is set to (8, 16, 16), and the input video has a shape of (32, 224, 224, 3), consisting of 32 RGB frames with a resolution of 224×224 pixels. The video is divided into 4 (32/8) segments along the temporal axis and 14 (224/16) segments along each spatial axis, resulting in a total of 784 (4×14×14) spatio-temporal patches. Each patch is represented by an embedding vector whose dimension is equal to the number of filters in the 3D convolutional layer.
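The patch arithmetic above can be checked with a small NumPy sketch. A 3D convolution whose stride equals its kernel size is equivalent, up to the bias term, to a linear projection of each flattened non-overlapping tubelet, so the sketch uses a reshape followed by a matrix product instead of a convolution layer. The embedding width of 128 and the random weights are assumptions; the text does not state the number of filters.

```python
import numpy as np

def tubelet_embed(video: np.ndarray, weight: np.ndarray, patch=(8, 16, 16)) -> np.ndarray:
    """Split a (T, H, W, C) video into non-overlapping tubelets and project
    each flattened tubelet to an embedding vector."""
    T, H, W, C = video.shape
    pt, ph, pw = patch
    nt, nh, nw = T // pt, H // ph, W // pw
    # (nt, pt, nh, ph, nw, pw, C) -> (nt, nh, nw, pt, ph, pw, C) -> (tokens, features)
    tubelets = (video.reshape(nt, pt, nh, ph, nw, pw, C)
                     .transpose(0, 2, 4, 1, 3, 5, 6)
                     .reshape(nt * nh * nw, pt * ph * pw * C))
    return tubelets @ weight

rng = np.random.default_rng(0)
embed_dim = 128  # assumed embedding width
video = rng.random((32, 224, 224, 3), dtype=np.float32)
weight = rng.standard_normal((8 * 16 * 16 * 3, embed_dim)).astype(np.float32) * 0.02

tokens = tubelet_embed(video, weight)
print(tokens.shape)  # (784, 128)
```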

The positional encoder assigns spatio-temporal location information to the 784 patch embedding vectors. Although each patch is extracted from a unique spatio-temporal region, the embedding vectors themselves do not contain explicit information about their original positions within the video. As a result, it is difficult to analyze temporal order or spatial layout based solely on the embeddings. To address this, the positional encoder pre-generates positional embeddings corresponding to the 784 spatio-temporal locations and adds them to the patch embeddings, thereby providing explicit location information.
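As a rough illustration, the positional encoder can be viewed as a table with one vector per spatio-temporal location that is added element-wise to the patch embeddings. In the sketch below, random values stand in for both the patch embeddings and the positional table; the embedding width and the row-major token order are assumptions, and whether the table is learned or fixed is not stated in the text.

```python
import numpy as np

grid = (4, 14, 14)               # temporal, vertical, horizontal patch counts
num_tokens = int(np.prod(grid))  # 784 spatio-temporal locations
embed_dim = 128                  # assumed embedding width

rng = np.random.default_rng(0)
patch_tokens = rng.standard_normal((num_tokens, embed_dim))
# One positional vector per location (random stand-in values).
pos_table = rng.standard_normal((num_tokens, embed_dim)) * 0.02

tokens_with_pos = patch_tokens + pos_table

# Flat token index -> (t, row, col) location, assuming row-major token order.
t, row, col = np.unravel_index(500, grid)
print(num_tokens, (int(t), int(row), int(col)))
```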

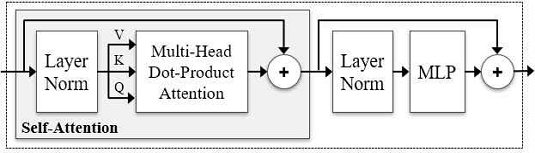

The transformer encoder receives 784 embedding vectors containing both spatio-temporal features and positional information, and applies a self-attention mechanism to infer whether the input video depicts a flooding event. The self-attention algorithm computes the relationships between each vector and all others, allowing the model to identify which spatio-temporal regions are most relevant for the task. This enables joint analysis across spatial and temporal dimensions, and the final flood classification is derived from the attention-weighted representation.
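The core computation can be sketched as single-head scaled dot-product self-attention followed by a pooled classification head. This is a simplification under stated assumptions: a real Transformer encoder also uses multiple heads, residual connections, layer normalization, and feed-forward layers, and the mean pooling, sigmoid head, and all weights below are hypothetical rather than the paper's configuration.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over all tokens."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])  # (N, N) pairwise relevance scores
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
n_tokens, dim = 784, 64  # 784 tokens as in the text; dim is assumed
tokens = rng.standard_normal((n_tokens, dim))
w_q, w_k, w_v = (rng.standard_normal((dim, dim)) / np.sqrt(dim) for _ in range(3))
w_out = rng.standard_normal(dim) / np.sqrt(dim)  # hypothetical classification head

attended, weights = self_attention(tokens, w_q, w_k, w_v)
logit = attended.mean(axis=0) @ w_out            # mean-pool tokens, then linear head
flood_probability = 1.0 / (1.0 + np.exp(-logit))
print(weights.shape, float(flood_probability))
```

Row i of `weights` shows how strongly token i attends to every other spatio-temporal patch.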

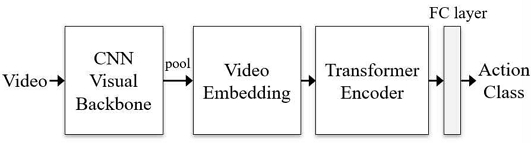

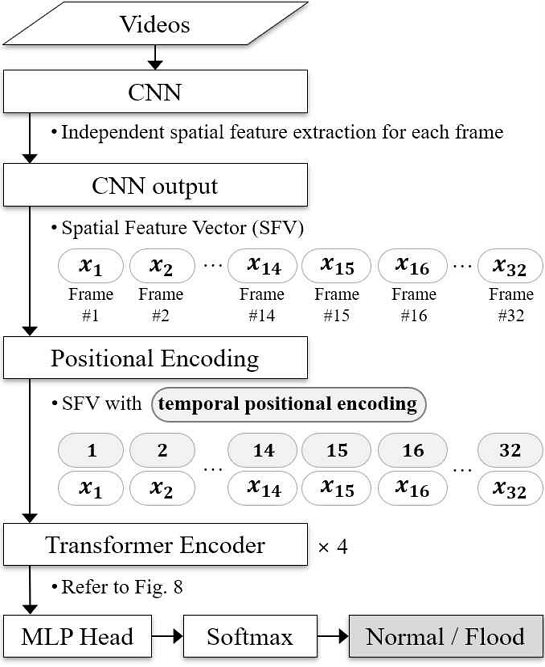

The proposed spatio-temporal flood detection model based on the CNN–Transformer hybrid architecture consists of three main modules: a CNN, a positional encoder, and a transformer encoder, as shown in Figure 9. The CNN module extracts spatial features independently from each frame of the input video using 2D convolutional layers. Unlike ViViT, which processes spatial and temporal information simultaneously, this model extracts only spatial features per frame. Since the CNN does not encode the temporal order of frames, the positional encoder adds temporal position information to each spatial feature vector. The transformer encoder then receives these temporally augmented spatial features and analyzes temporal dependencies through self-attention to determine whether flooding is present in the video.
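The data flow of the hybrid model up to the transformer encoder can be sketched as below. Average pooling stands in for the 2D CNN, and a sinusoidal encoding of the frame index stands in for the positional encoder; both are assumptions chosen to keep the example self-contained, as are the 32-frame input and the 7×7 pooling grid.

```python
import numpy as np

def frame_features(video: np.ndarray, grid: int = 7) -> np.ndarray:
    """Stand-in for the 2D CNN: average-pool each frame onto a grid x grid map
    and flatten it, giving one spatial feature vector per frame."""
    T, H, W, C = video.shape
    pooled = video.reshape(T, grid, H // grid, grid, W // grid, C).mean(axis=(2, 4))
    return pooled.reshape(T, grid * grid * C)

def temporal_encoding(num_frames: int, dim: int) -> np.ndarray:
    """Sinusoidal encoding of the frame index (an assumed choice)."""
    pos = np.arange(num_frames)[:, None]
    i = np.arange(dim)[None, :]
    angle = pos / np.power(10000.0, (2 * (i // 2)) / dim)
    return np.where(i % 2 == 0, np.sin(angle), np.cos(angle))

rng = np.random.default_rng(0)
video = rng.random((32, 224, 224, 3), dtype=np.float32)

features = frame_features(video)                        # (32, 147): one vector per frame
tokens = features + temporal_encoding(*features.shape)  # add frame-order information
print(tokens.shape)
```

In this sketch the encoder would receive one token per frame (32 tokens) rather than the 784 tubelet tokens of the ViViT-based model, so attention operates over time only.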

3-4 Performance Comparison and Evaluation

This section presents the performance evaluation of the deep learning (DL)-based road flood detection models. The development environment for the DL-based spatio-temporal flood detection model proposed in Section 3-3 is summarized in Table 3.

To validate the performance of the proposed models, benchmark experiments were conducted using the publicly available Kinetics-400 video dataset, as described in Section 3. Kinetics-400 is a large-scale dataset developed by Google DeepMind, comprising 400 human action classes. Each action is represented by video clips with an average duration of 10 seconds.

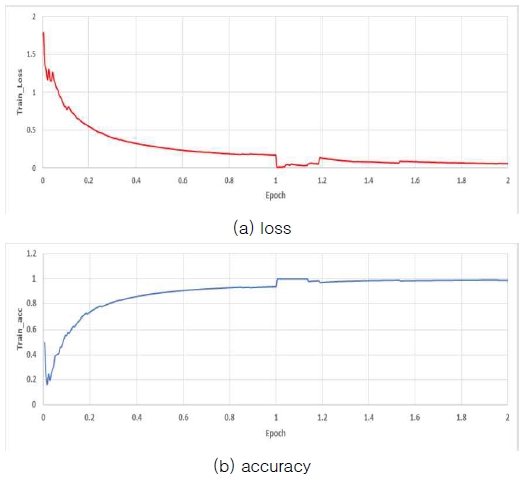

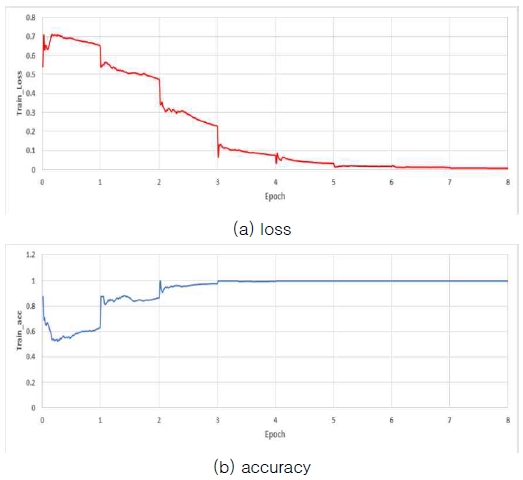

In this paper, 25 classes were selected from the 400 action classes in the Kinetics-400 dataset. For each class, approximately 800 video clips of 10 seconds in length were used for model training and validation. Figures 10 and 11 present the training results of the spatio-temporal flood detection algorithms based on the ViViT and Hybrid architectures, respectively, showing the variations in loss and accuracy during the training process.

Table 4 compares the accuracy of the proposed spatio-temporal flood detection algorithms with that of the reference model[11]. The proposed ViViT-based and Hybrid-based models achieved accuracies of 88% and 84%, respectively, improvements of approximately 12 and 8 percentage points over the reference[11].

As shown in Figures 10 and 11, the ViViT-based flood detection algorithm completed training at 2 epochs, while the Hybrid-based algorithm converged at 8 epochs. At the point of completion, both models achieved 100% inference accuracy on the training dataset. Here, the training completion point was defined as the epoch with the minimum training loss and maximum validation accuracy.
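One way to make this completion criterion operational is sketched below. Because the epoch with the lowest training loss and the epoch with the highest validation accuracy need not coincide, the sketch ranks epochs by validation accuracy first and uses training loss only to break ties; this ordering is an assumption, and the metric values are hypothetical rather than results from this paper.

```python
def select_checkpoint(history):
    """Return the epoch with the highest validation accuracy, breaking ties
    by the lowest training loss (the tie-break order is an assumption)."""
    return max(history, key=lambda h: (h["val_acc"], -h["train_loss"]))["epoch"]

# Hypothetical per-epoch metrics, not results from the paper.
history = [
    {"epoch": 1, "train_loss": 0.40, "val_acc": 0.90},
    {"epoch": 2, "train_loss": 0.05, "val_acc": 0.97},
    {"epoch": 3, "train_loss": 0.02, "val_acc": 0.95},
    {"epoch": 4, "train_loss": 0.03, "val_acc": 0.97},
]
best_epoch = select_checkpoint(history)
print(best_epoch)
```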

The performance was evaluated by inferring the validation dataset using the model weights at this point, and the results of the proposed spatio-temporal flood detection algorithms are summarized in Table 5.

The nighttime accuracy was lower than daytime performance, particularly for the ViViT-based model. This can be attributed to (1) the smaller proportion of nighttime data in the training set (approximately one-third of daytime volume), (2) lower illumination causing reduced contrast in flood features, and (3) increased sensor noise under low-light conditions. These factors collectively hinder the extraction of discriminative spatial and temporal features.

The proposed ViViT-based spatio-temporal flood detection model achieved inference accuracies of 99% and 79% on the daytime and nighttime validation datasets, respectively. The Hybrid-based model recorded accuracies of 96% and 87% for the same conditions. For the combined daytime and nighttime dataset, the ViViT-based and Hybrid-based models achieved inference accuracies of 97% and 94%, respectively, demonstrating overall high performance.

3-5 Generalization Performance Analysis

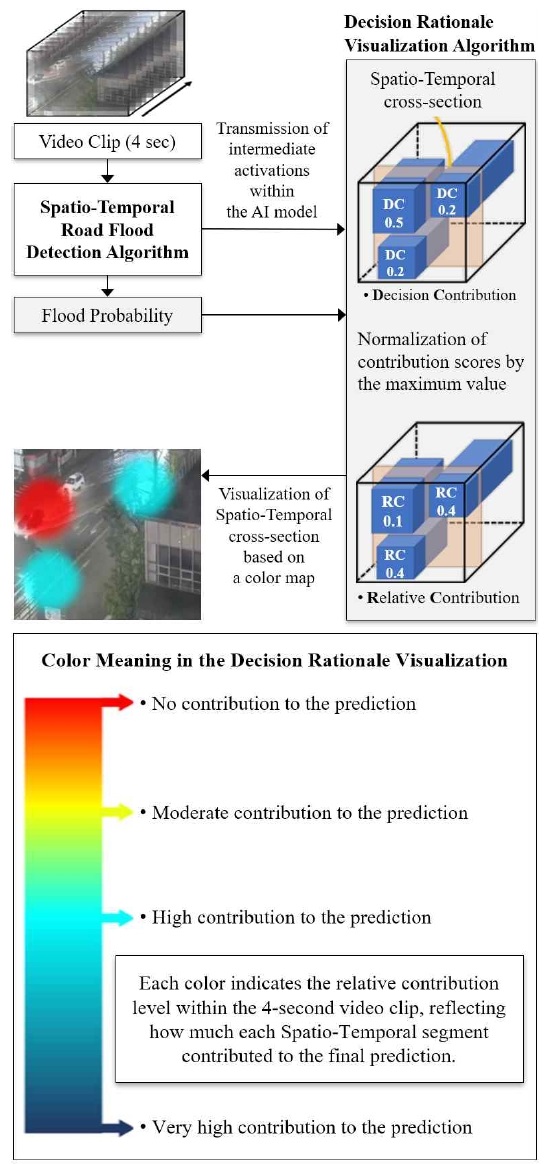

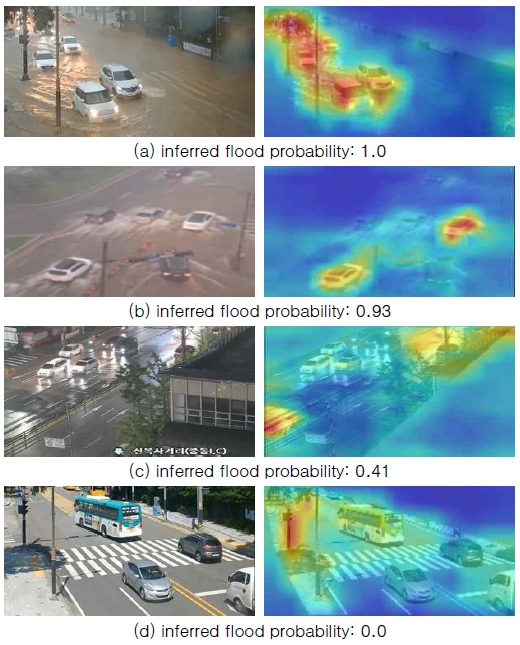

This section presents the generalization performance of the spatio-temporal flood detection algorithms proposed in Section 3-3, and compares the visual similarity between the training and validation datasets[12]. Furthermore, the decision rationale of the flood detection models is visualized to assess whether their predictions align with the predefined criteria for urban road flooding classification. As shown in Figure 12, a 4-second video clip is used as input to compute the contribution of each spatio-temporal point. These contributions are then normalized by the maximum value and visualized using color mapping based on relative contribution levels.
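The normalization and color-mapping step can be sketched as follows, assuming non-negative contribution scores laid out on the 4×14×14 patch grid. The scores here are random placeholders, and the simple blue-to-red ramp is an assumed color map; how the contributions themselves are computed is outside the scope of this sketch.

```python
import numpy as np

def contributions_to_colors(contrib: np.ndarray) -> np.ndarray:
    """Normalise contributions by their maximum and map them to RGB,
    from blue (low) to red (high). The two-colour ramp is an assumed colour map."""
    peak = contrib.max()
    rel = contrib / peak if peak > 0 else np.zeros_like(contrib)
    rgb = np.stack([rel, np.zeros_like(rel), 1.0 - rel], axis=-1)
    return (rgb * 255).round().astype(np.uint8)

# Hypothetical non-negative contribution scores on the 4 x 14 x 14 patch grid.
rng = np.random.default_rng(0)
contrib = rng.random((4, 14, 14))
heatmap = contributions_to_colors(contrib)
print(heatmap.shape)  # (4, 14, 14, 3)
```

Because every value is divided by the clip's own maximum, the colors show relative importance within one clip and are not comparable across clips.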

Figure 13 presents the final results of this study, including the original road videos, the visualized decision basis, and the inferred flood probability used for road flood classification. In Figures 13(a) and 13(b), the model predominantly focuses on the water splashes at the front of the vehicle and the ripples propagating toward the sides and rear as the vehicle moves through pooled water. In Figure 13(c), the model's attention is primarily directed to the water surface reflecting light.

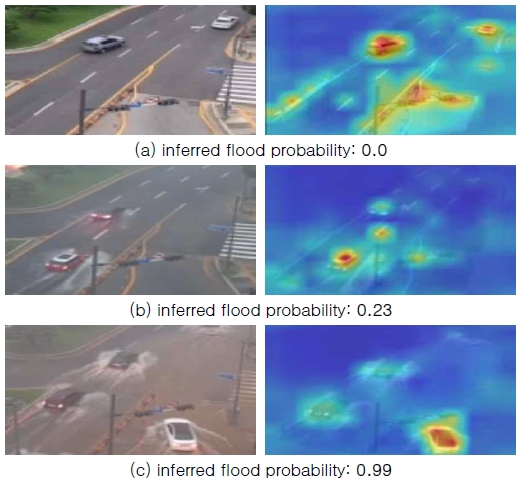

However, in cases where the video is captured from a long distance and the vehicle appears small, the model may fail to extract meaningful flood-related features unless distinct water splashes or ripples are present. The proposed flood detection models process 4-second video clips resized to 224×224 pixels. During this resizing process, features related to small vehicles and subtle flooding cues may be lost, leading to reduced inference accuracy. Figure 14(b) shows a video captured from a long distance, while Figure 14(c) presents the scene with magnification to enhance the visibility of flood features.

3-6 Future Work

Although the proposed algorithms demonstrate strong generalization performance, as shown in Section 3-5, future work will address the limitations identified in Figure 14. First, we plan to improve inference accuracy in cases where no vehicles are present on the road. Second, we will address cases in which flood features are not effectively captured because a vehicle remains stationary despite road flooding. To address these challenges, we are considering an approach that aggregates the flood probabilities inferred for each 4-second clip over longer temporal windows, such as 1-minute or 5-minute intervals, to improve robustness and contextual understanding.
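A minimal sketch of such an aggregation is shown below. Averaging is only one possible rule, and the 0.5 decision threshold and the per-clip probabilities are hypothetical; the text leaves the aggregation method open.

```python
from statistics import fmean

def aggregate_probabilities(clip_probs, clip_seconds=4, window_seconds=60):
    """Average consecutive per-clip flood probabilities over fixed windows.
    A trailing window with fewer clips is averaged over what is available."""
    per_window = window_seconds // clip_seconds
    return [fmean(clip_probs[i:i + per_window])
            for i in range(0, len(clip_probs), per_window)]

# Hypothetical per-clip probabilities covering 2 minutes of 4-second clips.
clip_probs = [0.1] * 15 + [0.9] * 10 + [0.2] * 5
window_probs = aggregate_probabilities(clip_probs)
flooded = [p >= 0.5 for p in window_probs]  # the 0.5 threshold is an assumption
print(window_probs, flooded)
```

With a 1-minute window, 15 clips contribute to each decision, so a few clips without visible splashes or moving vehicles have less influence on the outcome.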

Ⅳ. Conclusions

This study proposed spatio-temporal deep learning models for real-time road flood detection using CCTV video footage. Two architectures were developed: one based on the ViViT framework and another using a CNN–Transformer hybrid design. Their performance was evaluated using the Kinetics-400 dataset and real-world CCTV footage. The experimental results show notable improvements in accuracy, with the proposed models outperforming the reference model by approximately 8 to 12%. The ViViT-based model, in particular, achieved an inference accuracy of 97% on the combined daytime and nighttime validation dataset.

To improve generalization, data preprocessing and augmentation techniques were employed to minimize visual discrepancies between the training and validation datasets. Interpretability analysis was also conducted to visualize the models' decision-making processes, enhancing transparency and reliability. However, certain limitations were identified in videos captured from long distances or in scenes without moving vehicles, where flood-related features are less prominent. Future research will focus on aggregating inference results over extended time windows, such as one or five minutes, to improve robustness and contextual understanding.

This paper demonstrates the practical viability of real-time road flood detection using CCTV by leveraging spatio-temporal deep learning models. These findings are expected to provide a solid foundation for integrating such models into urban disaster response systems, contributing to timely and automated flood monitoring.

Acknowledgments

This work was supported by Korea Planning & Evaluation Institute of Industrial Technology funded by the Ministry of the Interior and Safety (MOIS, Korea). [Development and Application of Advanced Technologies for Urban Runoff Storage Capability to Reduce the Urban Flood Damage / RS-2024-00415937]

References

[1] C. Feichtenhofer, H. Fan, J. Malik, and K. He, “SlowFast Networks for Video Recognition,” in Proceedings of 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, pp. 6201-6210, 2019. [https://doi.org/10.1109/ICCV.2019.00630]

[2] D. Kondratyuk, L. Yuan, Y. Li, L. Zhang, M. Tan, M. Brown, and B. Gong, “MoViNets: Mobile Video Networks for Efficient Video Recognition,” in Proceedings of 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville: TN, pp. 16015-16025, 2021. [https://doi.org/10.1109/CVPR46437.2021.01576]

[3] C. Feichtenhofer, “X3D: Expanding Architectures for Efficient Video Recognition,” in Proceedings of 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle: WA, pp. 200-210, 2020. [https://doi.org/10.1109/CVPR42600.2020.00028]

[4] A. Arnab, M. Dehghani, G. Heigold, C. Sun, M. Lučić, and C. Schmid, “ViViT: A Video Vision Transformer,” in Proceedings of 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal: Canada, pp. 6816-6826, 2021. [https://doi.org/10.1109/ICCV48922.2021.00676]

[5] A. Dosovitskiy, L. Beyer, A. Kolesnikov, D. Weissenborn, X. Zhai, T. Unterthiner, ... and N. Houlsby, “An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale,” arXiv:2010.11929, 2021. [https://doi.org/10.48550/arXiv.2010.11929]

[6] G. Bertasius, H. Wang, and L. Torresani, “Is Space-Time Attention All You Need for Video Understanding?,” in Proceedings of the 38th International Conference on Machine Learning (PMLR), 2021. [https://doi.org/10.48550/arXiv.2102.05095]

[7] G. Sharir, A. Noy, and L. Zelnik-Manor, “An Image is Worth 16x16 Words, What Is a Video Worth?,” arXiv:2103.13915, 2021. [https://doi.org/10.48550/arXiv.2103.13915]

[8] M. C. Leong, H. Zhang, H. L. Tan, L. Li, and J. H. Lim, “Combined CNN Transformer Encoder for Enhanced Fine-grained Human Action Recognition,” arXiv:2208.01897, 2022. [https://doi.org/10.48550/arXiv.2208.01897]

[9] N. Carion, F. Massa, G. Synnaeve, N. Usunier, A. Kirillov, and S. Zagoruyko, “End-to-end Object Detection with Transformers,” in Proceedings of the 16th European Conference on Computer Vision, Glasgow: UK, pp. 213-229, 2020. [https://doi.org/10.48550/arXiv.2005.12872]

[10] Y. Chen, H. Fan, B. Xu, Z. Yan, Y. Kalantidis, M. Rohrbach, ... and J. Feng, “Drop an Octave: Reducing Spatial Redundancy in Convolutional Neural Networks with Octave Convolution,” in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul: Korea, pp. 3435-3444, 2019. [https://doi.org/10.48550/arXiv.1904.05049]

[11] J. Carreira and A. Zisserman, “Quo Vadis, Action Recognition? A New Model and the Kinetics Dataset,” in Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu: HI, pp. 4724-4733, 2017. [https://doi.org/10.1109/CVPR.2017.502]

[12] H. Chefer, S. Gur, and L. Wolf, “Transformer Interpretability Beyond Attention Visualization,” in Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville: TN, pp. 782-791, 2021. [https://doi.org/10.1109/CVPR46437.2021.00084]

[13] S. Abnar and W. Zuidema, “Quantifying Attention Flow in Transformers,” in Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pp. 4190-4197, 2020. [https://doi.org/10.18653/v1/2020.acl-main.385]

Author Biographies

2015.08:Civil Engineering, Graduate School of Inha University (Ph.D Degree)

2007.10~2008.10: Korea Infrastructure Safety & Technology Corporation (KISTEC)

2015.10~Present: Senior Researcher in Dept. of Hydro Science and Engineering Research, Korea Institute of Civil Engineering and Building Technology (KICT)

2022.08~Present: Senior Researcher in Research Strategic Planning Department, KICT

※Research Interests:Hydrology, Flood Forecasting, Weather Radar, Machine Learning, AIoT

2016.08:Civil Engineering, Graduate School of Inha University (Ph.D Degree)

2017.03~2018.10: Postdoctoral researcher in Dept. of Hydro Science and Engineering Research, Korea Institute of Civil Engineering and Building Technology (KICT)

2018.11~Present: Senior Researcher in Dept. of Hydro Science and Engineering Research, KICT

※Research Interests:Hydrology, Flood Forecasting, Rainfall radar

2009.08:Civil Engineering, Graduate School of Korea University (Ph.D Degree)

2010.05~2020.04: Senior Researcher in Dept. of Hydro Science and Engineering Research

2020.04~Present: Research fellow in Dept. of Hydro Science and Engineering Research, KICT

※Research Interests:Hydrology, Flood Forecasting, Rainfall radar

2010.08:Department of IT Engineering, Graduate School of Mokwon University (Ph.D Degree)

2012.03~2022.02: Professor in Dept. Computer Engineering, Mokwon University

2021.04~2021.12: Visiting Researcher in Dept. of Hydro Science and Engineering Research, KICT

2022.03~2024.02: Professor in Dept. of Computer Information, Osan University

2023.04~2023.12: Visiting Researcher in Dept. of Hydro Science and Engineering Research, KICT

2024.03~Present: Professor in School of Computing and Artificial Intelligence, Hanshin University

※Research Interests:Computer Vision, Deep Learning, Disaster Prevention Application